The timing advance processor plays a critical and often overlooked role in keeping the uplink of modern cellular networks synchronized. It ensures that signals from many mobile devices reach the base station within their assigned time windows instead of colliding or overlapping destructively. Understanding this component helps engineers, students, and technology enthusiasts appreciate how wireless networks stay reliable, and it quietly supports the calls, messages, and data transfers that billions of people make every day.

What Is a Timing Advance Processor?

A timing advance processor is a specialized component that calculates and manages transmission timing adjustments in a wireless network. It compensates for the propagation delay that signals experience between mobile devices and the base station. Without precise timing control, users sharing the same channel would overlap in time and interfere with each other. The processor therefore acts as the network's uplink timekeeper, keeping thousands of simultaneous transmissions in order.

The Core Problem It Solves

Mobile devices sit at different distances from their serving base station, so their signals take different amounts of time to arrive. A device two kilometers away experiences a much larger propagation delay than one fifty meters away. In time-division multiple access (TDMA) systems, each device transmits only in its assigned time slot, and the slots of different devices must not overlap at the receiver. Because a device times its uplink from the downlink signal it receives, which has already arrived late, the misalignment seen at the base station equals the full round-trip delay. Without compensation, transmissions from distant devices would spill into the slots of nearby devices.
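A quick calculation makes the scale concrete. The minimal sketch below assumes free-space propagation at the speed of light and computes the round-trip delay, the quantity a timing advance has to cover, for the two distances mentioned above. The function name is illustrative.

```python
C = 299_792_458.0  # speed of light in vacuum, m/s


def round_trip_delay_us(distance_m: float) -> float:
    """Round-trip propagation delay in microseconds for a one-way distance in meters."""
    return 2.0 * distance_m / C * 1e6


for d in (50, 2000):
    print(f"{d:>5} m -> {round_trip_delay_us(d):.2f} us")
```

The device two kilometers away arrives roughly 13 microseconds later than a device at the site itself, while the fifty-meter device is off by only about a third of a microsecond.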

Where the Processor Fits in Network Architecture

The timing advance processor sits in the base station's baseband processing unit, at the physical layer. It takes its measurements from the received uplink signal and works with the medium access control (MAC) layer above it, which schedules and signals the resulting commands. In modern LTE and 5G systems, this function is distributed across dedicated hardware accelerators and software-defined processing units. Where and how it is implemented determines how quickly and accurately the network can respond to changing device distances.

The Historical Development of Timing Advance Technology

Timing advance became necessary once cellular systems began sharing channels in time. The first-generation analog networks of the early 1980s used frequency-division access, giving each call its own channel, so uplink time alignment was not a concern. GSM, whose specifications were developed during the late 1980s, introduced a structured timing advance mechanism for its TDMA uplink. As networks evolved from 2G through 3G and 4G to 5G, timing advance processing became considerably more sophisticated, and tracing this evolution shows how central timing control has been to cellular network design.

Timing Advance in GSM Networks

GSM used a relatively simple timing advance mechanism with integer values from 0 to 63. Each step equals one bit period, about 3.69 microseconds of round-trip delay, which corresponds to roughly 553 meters of one-way distance and gives a maximum range of about 35 kilometers. The base station measured the timing of each uplink burst and commanded the device to advance its transmission by the required number of steps. This simple but effective scheme kept uplink bursts aligned within GSM's TDMA frame structure.
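The step size and range follow directly from the bit period. The sketch below assumes ideal line-of-sight propagation and simply rounds the round-trip delay to the nearest bit period; a real base station derives the value from measured burst timing, not from a known distance. The function and constant names are hypothetical.

```python
C = 299_792_458.0              # speed of light, m/s
GSM_BIT_PERIOD_S = 48e-6 / 13  # one GSM bit period, about 3.69 us
GSM_TA_MAX = 63                # largest value the 6-bit timing advance can take

# One step covers one bit period of round-trip delay, i.e. half that distance one way.
GSM_STEP_M = C * GSM_BIT_PERIOD_S / 2


def gsm_timing_advance(distance_m: float) -> int:
    """Nearest GSM timing advance value for a one-way distance, clamped to 0..63."""
    round_trip_s = 2 * distance_m / C
    ta = round(round_trip_s / GSM_BIT_PERIOD_S)
    return min(max(ta, 0), GSM_TA_MAX)


print(f"step = {GSM_STEP_M:.0f} m, max range = {GSM_TA_MAX * GSM_STEP_M / 1000:.1f} km")
print(gsm_timing_advance(5_000))   # 9
print(gsm_timing_advance(50_000))  # beyond the range, clamped to 63
```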

Evolution Through UMTS and HSPA

Third-generation UMTS took a different path. Its main FDD mode, on which HSPA also builds, uses a code-division uplink in which users are separated by scrambling codes rather than time slots, so it relies mainly on fast closed-loop power control rather than timing advance. Uplink timing control remained important in the TDD variants: UTRA TDD supports timing advance, and TD-SCDMA keeps uplink arrivals aligned with frequent closed-loop synchronization commands. Across generations, timing requirements kept tightening, pushing timing processor design toward greater sophistication.

The LTE Revolution in Timing Precision

LTE raised timing precision requirements substantially. Its uplink uses single-carrier FDMA (SC-FDMA), a DFT-spread form of OFDM in which users occupy different subcarriers of the same symbols. Orthogonality between those users holds only while their signals, including the channel's delay spread, arrive within the cyclic prefix, so residual misalignment must stay well below the cyclic prefix duration. LTE expresses timing advance in steps of 16 samples at a 30.72 MHz sampling rate, about 0.52 microseconds each, so LTE timing advance processors must work with sub-microsecond accuracy that earlier systems never demanded.
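Putting numbers on this, the sketch below converts the LTE step into time and one-way distance and compares it with the normal cyclic prefix of 144 samples (the first symbol of each slot uses a slightly longer 160-sample prefix, which the sketch ignores). Variable names are illustrative.

```python
C = 299_792_458.0       # speed of light, m/s
TS = 1 / 30.72e6        # LTE basic time unit Ts, about 32.55 ns
TA_STEP_S = 16 * TS     # one timing advance step = 16 Ts
NORMAL_CP_S = 144 * TS  # normal cyclic prefix of most symbols

step_m = C * TA_STEP_S / 2  # one-way distance covered by one step

print(f"TA step: {TA_STEP_S * 1e9:.1f} ns ({step_m:.1f} m one-way)")
print(f"normal CP: {NORMAL_CP_S * 1e6:.2f} us = {NORMAL_CP_S / TA_STEP_S:.0f} TA steps")
```

One step is about 0.52 microseconds, or roughly 78 meters of distance, and the whole cyclic prefix is only nine steps long, which is why a few steps of error already matter.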

How the Timing Advance Processor Actually Works

To understand how the processor works, it helps to follow its main steps from measurement to command delivery. The process runs continuously in the background while users browse, stream, and communicate. Modern implementations repeat the measure-and-correct cycle often enough to track moving devices, and a correction typically takes effect within milliseconds of being sent. The processor's speed and accuracy therefore determine how well the network serves users whose signal conditions are changing.

Measuring Uplink Signal Arrival Time

The processor first measures when each device's uplink signal arrives at the base station, typically by correlating the received signal against a known reference signal. It compares this arrival time with the expected arrival time defined by the base station's frame timing. The difference is the device's timing error: the part of its round-trip delay that its current timing advance does not yet cover. This measurement is the raw input from which the processor calculates a correction.

Calculating the Correct Timing Advance Value

After measuring the timing error, the processor converts it into a timing advance command. Larger distances produce larger timing errors and therefore larger advances. Because the command is expressed in time units, the conversion is essentially a quantization to the system's timing advance step; the propagation speed, about 300,000 kilometers per second, comes in when a timing advance value is related back to a physical distance. The result is a command the device can apply directly.
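The quantization step can be sketched as follows, assuming the timing error has already been measured in seconds and that the processor rounds to the nearest step. The function name is hypothetical, and the LTE step size is used only as an example value.

```python
def quantize_timing_error(error_s: float, step_s: float) -> tuple[int, float]:
    """Split a measured timing error into whole advance steps and a leftover.

    Positive error means the signal arrived late, so the device should
    advance its transmission by `steps` more steps.
    """
    steps = round(error_s / step_s)
    residual_s = error_s - steps * step_s
    return steps, residual_s


LTE_TA_STEP_S = 16 / 30.72e6  # about 0.52 us

steps, residual = quantize_timing_error(1.2e-6, LTE_TA_STEP_S)
print(steps, f"{residual * 1e9:.0f} ns")
```

Rounding to the nearest step leaves at most half a step (about 0.26 microseconds for the LTE step) uncorrected, which is small compared with the cyclic prefix.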

Delivering Timing Advance Commands to Devices

The base station sends timing advance commands to each device over downlink signaling: GSM carries the value in the slow associated control channel (SACCH), while LTE sends it as a MAC control element on the shared downlink channel. The device applies the adjustment at a defined point; in LTE, a command received in subframe n takes effect from subframe n+6. The processor then checks whether the device's timing has improved and issues further corrections if needed. This closed feedback loop continuously tracks and corrects the timing of every active device in the cell.

Handling Device Mobility and Changing Distances

Moving devices continuously change their distance from the base station, so their timing advance must be updated over time. The rate of change is modest, though: a vehicle moving directly away from the site at 120 km/h covers about 33 meters per second and needs a little over two seconds to cross one LTE timing advance step of roughly 78 meters. Some implementations track each device's timing trend and anticipate corrections from its observed movement. Such predictive algorithms can reduce the lag between a change in distance and the corresponding correction.
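The arithmetic behind this is short. The sketch below assumes the worst case of purely radial motion and computes how long a device needs to cross one timing advance step in LTE and in GSM. Names are illustrative.

```python
C = 299_792_458.0
LTE_STEP_M = C * (16 / 30.72e6) / 2   # about 78 m per LTE step
GSM_STEP_M = C * (48e-6 / 13) / 2     # about 553 m per GSM step


def seconds_per_step(speed_kmh: float, step_m: float) -> float:
    """Time for a device moving radially at speed_kmh to cross one timing advance step."""
    speed_ms = speed_kmh / 3.6
    return step_m / speed_ms


for name, step in (("LTE", LTE_STEP_M), ("GSM", GSM_STEP_M)):
    print(f"{name}: {seconds_per_step(120, step):.1f} s per step at 120 km/h")
```

For comparison, during one 10 ms LTE radio frame the same vehicle moves only about a third of a meter, far less than a single step.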

Timing Advance Processor in LTE Networks

LTE is where timing advance processing meets some of its most demanding and precisely defined requirements. Its SC-FDMA uplink relies on orthogonality between users, which depends on precise timing alignment. The LTE specifications define both the granularity of timing advance and the timing of commands in detail, so LTE base stations dedicate substantial processing to timing measurement and control.

The LTE Random Access Procedure

Every LTE device establishes its initial timing alignment through the random access procedure before it can transmit user data. The device sends a random access preamble, whose arrival time lets the base station estimate the round-trip delay. The base station replies with a random access response carrying an 11-bit initial timing advance value for that device. This initial alignment sets the baseline that later closed-loop commands refine.

Closed-Loop Timing Adjustment in LTE

After initial alignment, LTE maintains timing through a closed loop driven by uplink measurements. The base station measures timing error whenever the device transmits, using reference signals as well as data and control transmissions, and sends correction commands as needed. Each MAC-layer command carries a 6-bit value that adjusts the device's timing in units of 16 samples at the 30.72 MHz clock rate, about 0.52 microseconds per unit. The resulting accuracy keeps uplink interference low enough that it does not meaningfully degrade capacity or coverage.
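The device-side update below follows the LTE rule N_TA,new = N_TA,old + (T_A − 31) × 16, with N_TA counted in Ts units. The base-station encoder is a hypothetical illustration, since how a vendor chooses the command is implementation-specific, and the sketch ignores the delay before the device applies a command.

```python
TA_UNIT_TS = 16   # each timing advance unit is 16 Ts (Ts = 1/30.72 MHz)
TA_CMD_MAX = 63   # 6-bit command field
TA_CMD_ZERO = 31  # command value meaning "no change"


def encode_ta_command(timing_error_ts: float) -> int:
    """Base-station side (hypothetical): choose the 6-bit command closest to the error.

    timing_error_ts > 0 means the uplink arrived late, so the device must advance more.
    Errors beyond the field's range are clamped; later commands finish the job.
    """
    units = round(timing_error_ts / TA_UNIT_TS)
    return min(max(units + TA_CMD_ZERO, 0), TA_CMD_MAX)


def apply_ta_command(n_ta_old: int, t_a: int) -> int:
    """Device side: N_TA,new = N_TA,old + (T_A - 31) * 16, in units of Ts."""
    if not 0 <= t_a <= TA_CMD_MAX:
        raise ValueError("timing advance command must be 0..63")
    return n_ta_old + (t_a - TA_CMD_ZERO) * TA_UNIT_TS


n_ta = 1600                  # example value set during random access
cmd = encode_ta_command(50)  # uplink measured 50 Ts late
n_ta = apply_ta_command(n_ta, cmd)
print(cmd, n_ta)
```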

Timing Advance and Cell Size Limitations

The largest timing advance a system supports limits the maximum radius of its cells. The initial LTE timing advance can reach about 667 microseconds of round-trip delay, corresponding to a cell radius of roughly 100 kilometers. Extended-coverage rural deployments can push timing advance processors toward these limits. Network planners must therefore consider timing advance limits when sizing cells for both dense urban areas and wide rural coverage.
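The 667-microsecond and 100-kilometer figures come from the 11-bit random access response field, whose values 0 to 1282 map to N_TA = T_A × 16 Ts. The sketch below reproduces them; it ignores other practical range limits such as the random access preamble format, and the names are illustrative.

```python
C = 299_792_458.0
TS = 1 / 30.72e6     # LTE basic time unit
RAR_TA_MAX = 1282    # largest 11-bit random access response value


def rar_timing_advance_s(t_a: int) -> float:
    """Initial advance from a random access response: N_TA = T_A * 16 Ts."""
    if not 0 <= t_a <= RAR_TA_MAX:
        raise ValueError("random access timing advance must be 0..1282")
    return t_a * 16 * TS


max_ta_s = rar_timing_advance_s(RAR_TA_MAX)
max_radius_km = C * max_ta_s / 2 / 1000  # the advance covers the round trip
print(f"max advance {max_ta_s * 1e6:.1f} us -> cell radius about {max_radius_km:.0f} km")
```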

Timing Advance Processor in 5G Networks

Fifth-generation networks introduce timing challenges beyond those of earlier generations. Massive MIMO antennas, millimeter-wave frequencies, and ultra-dense deployments each add their own synchronization demands. Network slicing and heterogeneous architectures also require timing advance processing that adapts to different operating configurations. As a result, 5G timing advance processing is considerably more complex than in previous generations.

Numerology and Flexible Subcarrier Spacing

5G introduces flexible numerology: the subcarrier spacing is 15 kHz multiplied by a power of two (15 × 2^μ kHz), ranging from 15 kHz up to 240 kHz. Because symbol and cyclic prefix durations shrink in proportion, each doubling of the subcarrier spacing halves the timing error the uplink can tolerate. 5G scales its timing advance step the same way, so the higher spacings used at millimeter-wave frequencies require proportionally finer timing accuracy. 5G timing advance processors must therefore adapt their precision to the active numerology.
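The scaling can be made explicit. The sketch below uses the 5G basic time unit Tc = 1/(480 kHz × 4096), a timing advance step of 16 × 64 / 2^μ Tc, and a normal cyclic prefix of 144 × 64 / 2^μ Tc for most symbols (a few symbols per subframe get a slightly longer prefix, which the sketch ignores). Function names are illustrative.

```python
TC = 1 / (480e3 * 4096)  # 5G basic time unit Tc, about 0.509 ns


def nr_ta_step_s(mu: int) -> float:
    """Timing advance granularity for numerology mu: 16 * 64 / 2**mu Tc."""
    return 16 * 64 / 2**mu * TC


def nr_normal_cp_s(mu: int) -> float:
    """Normal cyclic prefix of most symbols for numerology mu: 144 * 64 / 2**mu Tc."""
    return 144 * 64 / 2**mu * TC


for mu in range(5):
    scs_khz = 15 * 2**mu
    print(f"{scs_khz:>3} kHz: TA step {nr_ta_step_s(mu) * 1e9:6.1f} ns, "
          f"CP {nr_normal_cp_s(mu) * 1e6:.3f} us")
```

At 15 kHz the step equals the LTE step of about 0.52 microseconds; at 120 kHz both the step and the cyclic prefix are eight times smaller, and the prefix stays nine steps long at every numerology.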

Timing Advance in Millimeter-Wave Deployments

Millimeter-wave 5G cells cover short ranges, typically hundreds of meters rather than kilometers. Although the absolute delays are small, the short cyclic prefixes used at these frequencies leave little margin for error, so measurements must be highly precise. Dense millimeter-wave small cells also cause frequent handovers, each requiring timing to be re-established quickly in the new cell. Timing advance processors in these deployments must therefore complete measurement and adjustment cycles quickly and often.

Integrated Access and Backhaul Timing Considerations

5G introduces integrated access and backhaul (IAB), in which the same radio resources serve both user devices and the links that connect nodes back to the network. This dual role creates timing relationships across several hops that the processor must manage. For example, an IAB node can derive its own downlink transmit timing from the timing advance its mobile-termination part applies toward its parent node. Coordinating the access and backhaul timing domains requires logic that earlier timing processors never needed.

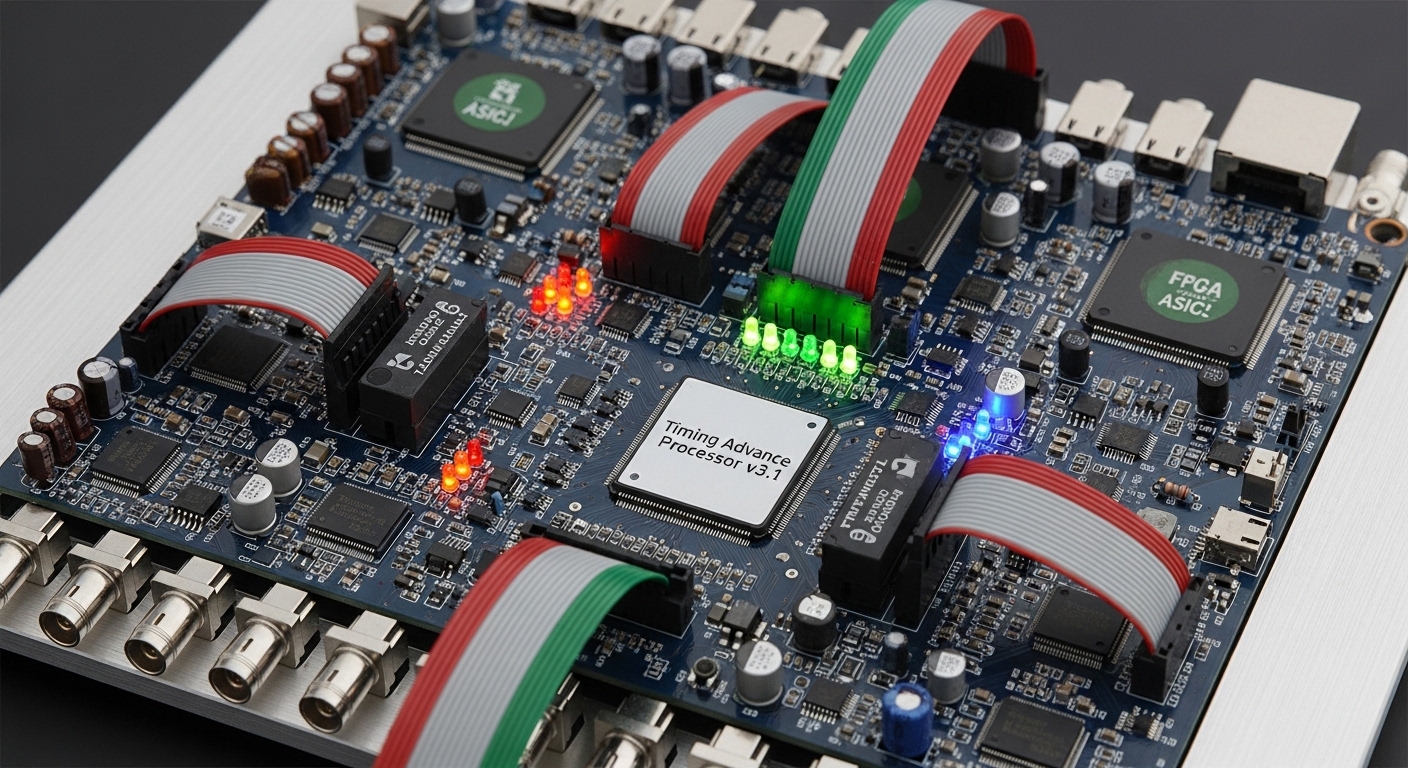

Hardware Implementation of Timing Advance Processors

Real-world timing advance processors combine dedicated hardware accelerators with programmable processing elements, balancing raw speed against flexibility. Field-programmable gate arrays (FPGAs) provide low-latency, sample-level measurement that is hard to match in software. Digital signal processors (DSPs) handle the calculations that turn timing measurements into correction commands. Modern base station designs therefore split timing advance functions across several specialized elements working in close coordination.

FPGA-Based Timing Measurement Units

FPGAs perform the time-critical, sample-level measurements on which the rest of the process depends. Their parallel architecture lets them measure timing across dozens or hundreds of uplink resource blocks at once. FPGA logic typically implements correlation algorithms that extract timing from received uplink reference signals. The FPGA measurement unit thus delivers the raw timing data that downstream processors turn into correction commands.
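Correlation-based timing measurement can be illustrated in a few lines. This is a deliberately simplified sketch: it uses a real-valued random ±1 pilot as a stand-in for real uplink reference signals (such as the Zadoff-Chu based sequences LTE uses), resolves only whole-sample delays, and searches lags by brute force, whereas hardware would evaluate many lags in parallel. All names are hypothetical.

```python
import random


def make_reference(length: int, seed: int = 1) -> list[float]:
    """Known +/-1 pilot sequence (a stand-in for a real uplink reference signal)."""
    rng = random.Random(seed)
    return [rng.choice((-1.0, 1.0)) for _ in range(length)]


def estimate_delay(received: list[float], reference: list[float], max_lag: int) -> int:
    """Return the integer sample lag at which the reference best matches the received signal."""
    best_lag, best_score = 0, float("-inf")
    for lag in range(max_lag + 1):
        # Correlate the pilot against the received samples shifted by `lag`.
        score = sum(
            r * received[lag + i]
            for i, r in enumerate(reference)
            if lag + i < len(received)
        )
        if score > best_score:
            best_lag, best_score = lag, score
    return best_lag


ref = make_reference(64)
true_delay = 7
noise = random.Random(2)
rx = [0.0] * true_delay + ref + [0.0] * 10
rx = [x + noise.gauss(0.0, 0.3) for x in rx]  # add mild Gaussian noise
print(estimate_delay(rx, ref, max_lag=16))
```

The correlation peak sits at the true delay because only there do all 64 pilot samples line up; at other lags the products largely cancel.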

DSP Processing for Timing Calculations

DSPs take the raw timing measurements and perform the calculations that produce correction values. They run filtering algorithms that smooth noisy measurements before commands are derived from them, and some implementations add prediction that anticipates timing changes from a device's observed velocity and trajectory. Together, these steps turn raw measurements into accurate, stable timing advance commands that devices can follow reliably.
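One simple way to smooth measurements is an exponential moving average combined with a deadband, sketched below. The class, its `alpha` and `deadband` parameters, and their defaults are hypothetical choices for illustration, not a documented vendor algorithm, and the sketch assumes each command is applied before the next measurement, ignoring the real signaling delay.

```python
class TimingAdvanceFilter:
    """Hypothetical smoother: exponential averaging plus a deadband.

    Inputs are measured timing errors in timing advance steps (positive = late).
    A command is issued only once the smoothed error reaches `deadband`, so
    single noisy measurements do not trigger corrections.
    """

    def __init__(self, alpha: float = 0.2, deadband: float = 0.5):
        if not 0.0 < alpha <= 1.0:
            raise ValueError("alpha must be in (0, 1]")
        self.alpha = alpha
        self.deadband = deadband
        self.estimate = 0.0

    def update(self, measured_steps: float) -> int:
        """Fold in one measurement and return the correction to send (0 = none)."""
        self.estimate += self.alpha * (measured_steps - self.estimate)
        if abs(self.estimate) < self.deadband:
            return 0
        command = round(self.estimate)
        # Assume the device applies the command before the next measurement,
        # so the error still being tracked shrinks by the same amount.
        self.estimate -= command
        return command


f = TimingAdvanceFilter()
true_error = 3  # device starts 3 steps late
for _ in range(30):
    true_error -= f.update(true_error)
print(true_error)
```

A smaller `alpha` rejects more noise but reacts more slowly to genuine movement, which is the basic trade-off any such filter has to balance.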

Software-Defined Radio Implementations

Software-defined radio platforms increasingly implement timing advance functions in optimized software on general-purpose processors. Faster processors and specialized instruction sets now make software implementations viable for many deployments, and software can gain new features and improved algorithms without hardware replacement in the field. The broader move toward software-defined networks is therefore gradually extending into timing advance processing.

Timing Advance and Network Performance Metrics

The quality of timing advance processing measurably influences several key network performance indicators. Poor alignment degrades uplink signal quality, reduces throughput, and increases interference between users, while good alignment supports higher spectral efficiency, better coverage, and a better user experience. Operators therefore monitor timing advance behavior as an indicator of overall uplink health.

Impact on Uplink Throughput

Precise timing alignment preserves the orthogonality between uplink transmissions, a prerequisite for maximum uplink throughput. Once a user's misalignment, together with the channel's delay spread, exceeds the cyclic prefix, it causes interference between symbols and between users that the receiver must work harder to suppress. The effect is most noticeable at cell edges, where propagation delays are largest and most variable. Operators that prioritize uplink throughput therefore favor timing advance processing that holds alignment tightly.

Effect on Cell Edge Performance

Users at the edge of a cell present the greatest timing advance challenges because their propagation delays are largest. They need the largest timing advance values and benefit most from accurate, responsive processing, so better accuracy at the cell edge translates directly into better service where conditions are hardest. Performance at large timing advance values can therefore distinguish one base station implementation from another.

Timing Accuracy and Interference Management

In dense deployments, timing misalignment in one cell can create interference that affects neighboring cells. Coordinated multipoint (CoMP) schemes, in which several base stations jointly transmit or receive, require precise timing alignment across those sites. Inter-cell interference coordination algorithms also assume accurate timing in their models. Timing advance accuracy therefore has network-wide implications beyond the cell it directly serves.

Timing Advance in Positioning and Location Services

Operators and researchers also use timing advance measurements for device positioning. A timing advance value encodes the round-trip delay between a device and its base station, which converts directly into a distance; in LTE, one step corresponds to about 78 meters. When distance estimates to three or more sites are available, trilateration yields a position estimate of useful accuracy. This reuses measurements the network already collects for synchronization.
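The sketch below shows both steps under simplifying assumptions: flat 2-D geometry, line-of-sight distances, and a distance estimate to each of three sites (in practice timing advance is normally known only toward the serving cell). Trilateration is done by subtracting the circle equations to get a 2×2 linear system. All names and coordinates are hypothetical.

```python
import math

C = 299_792_458.0
LTE_TA_STEP_S = 16 / 30.72e6


def ta_to_distance_m(n_ta_steps: int, step_s: float = LTE_TA_STEP_S) -> float:
    """One-way distance implied by an accumulated timing advance (round trip / 2)."""
    return C * n_ta_steps * step_s / 2


def trilaterate(anchors, distances):
    """2-D position from three (x, y) sites and distances, by linearizing the circle equations."""
    (x1, y1), (x2, y2), (x3, y3) = anchors
    r1, r2, r3 = distances
    # Subtracting circle 1 from circles 2 and 3 leaves two equations a*x + b*y = c.
    a1, b1 = 2 * (x2 - x1), 2 * (y2 - y1)
    c1 = r1**2 - r2**2 + x2**2 - x1**2 + y2**2 - y1**2
    a2, b2 = 2 * (x3 - x1), 2 * (y3 - y1)
    c2 = r1**2 - r3**2 + x3**2 - x1**2 + y3**2 - y1**2
    det = a1 * b2 - a2 * b1
    if abs(det) < 1e-9:
        raise ValueError("sites are collinear; position is ambiguous")
    return (c1 * b2 - c2 * b1) / det, (a1 * c2 - a2 * c1) / det


sites = [(0.0, 0.0), (4000.0, 0.0), (0.0, 3000.0)]
device = (1000.0, 1000.0)
exact = [math.dist(device, s) for s in sites]
step_m = ta_to_distance_m(1)
# Round each distance to a whole number of timing advance steps, as a real TA would be.
quantized = [ta_to_distance_m(round(d / step_m)) for d in exact]
print(trilaterate(sites, exact))
print(trilaterate(sites, quantized))
```

With exact distances the fix is exact; rounding each distance to whole 78-meter steps shifts it by tens of meters, which illustrates why timing advance positioning is useful but coarse.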

Observed Time Difference of Arrival

Observed time difference of arrival (OTDOA) positioning uses differences in signal arrival times from several base stations to locate a device geometrically. In LTE OTDOA, the device measures downlink positioning reference signals from multiple cells and reports the time differences; the uplink counterpart, UTDOA, measures the device's uplink signal at several receivers, which is where base station timing measurement hardware can contribute. Combining measurements from three or more sites can yield accuracy on the order of tens of meters in favorable conditions. Uplink timing measurement can therefore serve both synchronization and positioning.
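The same hyperbolic geometry underlies both the downlink and uplink variants: each measured time difference places the device on a curve, and the curves intersect at its position. The sketch below assumes noise-free time differences, four sites in a flat 2-D area, and a brute-force grid search chosen for clarity rather than the faster iterative solvers a positioning server would more likely use. Names and coordinates are hypothetical.

```python
import math

C = 299_792_458.0


def tdoa_locate(sites, tdoas_s, width_m, height_m, grid_m=20.0):
    """Brute-force TDOA fix: pick the grid point whose predicted arrival-time
    differences (relative to sites[0]) best match the measured ones."""
    best, best_cost = None, math.inf
    for ix in range(int(width_m / grid_m) + 1):
        for iy in range(int(height_m / grid_m) + 1):
            p = (ix * grid_m, iy * grid_m)
            d0 = math.dist(p, sites[0])
            # Sum of squared mismatches between predicted and measured differences.
            cost = sum(
                ((math.dist(p, s) - d0) / C - t) ** 2
                for s, t in zip(sites[1:], tdoas_s)
            )
            if cost < best_cost:
                best, best_cost = p, cost
    return best


sites = [(0.0, 0.0), (4000.0, 0.0), (0.0, 3000.0), (4000.0, 3000.0)]
device = (1200.0, 800.0)
d0 = math.dist(device, sites[0])
tdoas = [(math.dist(device, s) - d0) / C for s in sites[1:]]
print(tdoa_locate(sites, tdoas, 4000, 3000))
```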

Timing Advance for Emergency Location Services

Regulations in many countries require networks to provide location information for emergency calls. Timing advance-based positioning is a network-side method that works without any positioning capability in the device. Even a coarse estimate, such as the serving cell combined with a timing advance distance ring, improves emergency dispatch compared with no location at all. Timing advance processing thus contributes directly to public safety in ways most users never notice.

Challenges and Future Directions in Timing Advance Processing

New challenges keep arising as network densification, higher frequencies, and new use cases push timing advance systems further. Ultra-dense deployments with overlapping small cells create complex multi-layer timing environments that call for new management approaches. Non-terrestrial networks, including satellites and high-altitude platforms, introduce timing advance challenges on an entirely new scale. Ongoing work on timing advance processing therefore addresses scenarios that earlier specifications and hardware designs never anticipated.

Non-Terrestrial Network Timing Challenges

Satellite-based 5G nodes orbit hundreds or thousands of kilometers above the Earth, so propagation delays reach milliseconds rather than microseconds. Terrestrial timing advance ranges and update rates cannot cover such distances on their own. 3GPP Release 17 therefore introduced pre-compensation, in which the device estimates its own timing advance from its position (for example, from GNSS) and the satellite's broadcast orbital data, with the network supplying a common offset. Non-terrestrial support is perhaps the most dramatic expansion of timing advance requirements in cellular history.
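To see the scale, the sketch below computes the slant range to a low-Earth-orbit satellite from the law of cosines on a spherical Earth and compares the round-trip delay with the largest LTE initial advance. The 600 km altitude is an illustrative example value, and only the device-to-satellite link is counted; with a transparent payload the link from satellite to ground station adds further delay. Names are illustrative.

```python
import math

C = 299_792_458.0
EARTH_RADIUS_M = 6_371_000.0
LTE_MAX_TA_S = 1282 * 16 / 30.72e6  # about 0.67 ms, the largest LTE initial advance


def slant_range_m(altitude_m: float, elevation_deg: float) -> float:
    """Distance from a ground user to a satellite at the given altitude and elevation angle."""
    r = EARTH_RADIUS_M
    s = r * math.sin(math.radians(elevation_deg))
    # Solves (r + h)^2 = r^2 + d^2 + 2*r*d*sin(elevation) for d.
    return math.sqrt(s * s + altitude_m**2 + 2 * r * altitude_m) - s


for elev in (90, 30, 10):
    d = slant_range_m(600_000, elev)
    rtt = 2 * d / C
    print(f"{elev:>2} deg: {d / 1000:7.1f} km, round trip {rtt * 1e3:.2f} ms "
          f"({rtt / LTE_MAX_TA_S:.0f}x the LTE maximum)")
```

Even with the satellite directly overhead, the round trip is about 4 milliseconds, several times the largest terrestrial LTE advance, and it grows as the satellite drops toward the horizon.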

Machine Learning for Timing Prediction

Researchers are exploring machine learning algorithms that predict timing advance requirements from device movement patterns. Models trained on historical mobility data could anticipate timing changes before measurements confirm them, reducing the lag between position changes and corrections for fast-moving devices. Machine learning is therefore a promising direction for next-generation timing advance processors.

Ultra-Reliable Low-Latency Communication Requirements

Industrial automation and autonomous vehicle applications demand reliability and latency far beyond consumer requirements. Timing advance processing for these applications must keep alignment errors within tight bounds at all times, including errors that consumer applications would tolerate unnoticed. Functional safety requirements in industrial deployments may also call for redundant timing measurement and correction paths. Timing advance designs for ultra-reliable low-latency communication are therefore an emerging and growing development area.

Practical Implications for Network Engineers

Network engineers who understand timing advance processing make better decisions during planning, deployment, and optimization. Knowing its limits helps them avoid configurations that push systems beyond reliable operation, and the timing advance statistics in network management systems reveal useful information about cell loading, coverage, and where devices are located. Timing advance expertise therefore improves an engineer's effectiveness across many practical tasks.

Reading Timing Advance Statistics

Network management systems report timing advance distributions showing what percentage of samples fall into each timing advance range. A distribution with a large share of samples at high timing advance values indicates many distant users who may benefit from additional coverage infrastructure. Sudden changes in the distribution can point to antenna tilt changes, new obstructions, or feeder cable faults. Regular monitoring of these statistics gives engineers early warning of developing coverage or performance problems.
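Two summary figures are handy when reading such a report: the share of samples at or beyond a chosen timing advance range, and the range within which a given percentile of samples falls. The sketch below assumes per-bin sample counts ordered from nearest to farthest; actual bin layouts vary by vendor, and the counts and function names are hypothetical.

```python
def share_at_or_beyond(counts: list[int], bin_index: int) -> float:
    """Fraction of timing advance samples in bin_index or any higher bin."""
    total = sum(counts)
    return sum(counts[bin_index:]) / total if total else 0.0


def percentile_bin(counts: list[int], q: float) -> int:
    """Lowest bin by which a fraction q of all samples has been reached."""
    total = sum(counts)
    if total == 0:
        raise ValueError("no samples")
    running = 0
    for i, c in enumerate(counts):
        running += c
        if running >= q * total:
            return i
    return len(counts) - 1


# Hypothetical per-bin sample counts, nearest bin first.
counts = [40, 30, 15, 8, 4, 3]
print(f"{share_at_or_beyond(counts, 4):.0%} of samples in bin 4 or beyond")
print("95th percentile bin:", percentile_bin(counts, 0.95))
```

Tracking these two numbers over time is a simple way to spot the sudden shifts described above.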

Optimizing Cell Parameters Using Timing Data

Engineers use timing advance data to tune antenna tilt, transmit power, and handover thresholds. A cell showing unexpectedly large timing advance values may be overshooting its intended area and need more antenna downtilt to shrink its footprint. Timing advance measurements also help validate whether newly installed sites cover their intended service areas. This makes timing advance data a practical, readily available tool for continuous network optimization.

Final Thoughts on the Timing Advance Processor

The timing advance processor is a fundamental enabler of reliable wireless communication that rarely receives the recognition it deserves. Its invisible operation underlies virtually every data session and voice call on time-shared cellular uplinks. As networks continue evolving through 5G toward 6G and non-terrestrial architectures, timing advance processing will only grow in importance. Engineers, researchers, and technology enthusiasts who understand it gain real insight into how modern wireless networks work.